VR: fix expunging vm will remove dhcp entries of another vm in VR #4627

Conversation

Steps to reproduce the issue:

(1) Create two VMs, wei-001 and wei-002, and start them.

(2) Check /etc/cloudstack/dhcpentry.json and /etc/dhcphosts.txt in the VR. They have entries for both wei-001 and wei-002.

(3) Stop wei-002, and restart the VR (or restart the network with cleanup). Check /etc/cloudstack/dhcpentry.json and /etc/dhcphosts.txt in the VR. They have entries for wei-001 only (as wei-002 is stopped).

(4) Expunge wei-002. When it is done, check /etc/cloudstack/dhcpentry.json and /etc/dhcphosts.txt in the VR. They no longer have entries for wei-001. The VR health check fails at dhcp_check.py and dns_check.py.

@rhtyd @DaanHoogland @shwstppr

@blueorangutan package

@shwstppr a Jenkins job has been kicked to build packages. I'll keep you posted as I make progress.

Packaging result: ✔centos7 ✖centos8 ✔debian. JID-2611

I'm not able to reproduce the issue in either a KVM or a VMware environment. Can someone else verify? @vladimirpetrov @nvazquez @rhtyd

@shwstppr did you follow the steps in the description? In which step did you get a different result?

@weizhouapache In the final step. After expunging the second VM, the VR still had an entry for the first VM.

@shwstppr That is strange. Which CloudStack version did you test?

@shwstppr can you test Wei's steps to reproduce the issue against the latest 4.14 branch?

@shwstppr I updated the steps in the description.

@weizhouapache will test and update

@blueorangutan package

@rhtyd a Jenkins job has been kicked to build packages. I'll keep you posted as I make progress.

Packaging result: ✖centos7 ✖centos8 ✖debian. JID-2631

Not sure why, but I'm still not able to reproduce this. After I stopped VM-002 and VM-003, restarted the network with cleanup, and then expunged VM-002, the last entry in /etc/cloudstack/dhcpentry.json was not removed.

Packaging result: ✔centos7 ✖centos8 ✔debian. JID-2639

@blueorangutan test

@DaanHoogland a Trillian-Jenkins test job (centos7 mgmt + kvm-centos7) has been kicked to run smoke tests


Trillian test result (tid-3478)

@shwstppr strange.

@weizhouapache I was testing 4.14, #4150 to be specific.

@shwstppr I have tested with 4.14, 4.15 and 4.16, and the issue can be reproduced. Correction: it looks like the IPs are removed from /etc/cloudstack/dhcpentry.json randomly, not always the last IP.

@shwstppr can you test with Ubuntu 18.04 and see if that helps? @weizhouapache is the env an advanced zone, an advanced zone with security groups, or some other permutation?

@rhtyd @shwstppr I use an advanced zone with isolated networks.

Tested with a shared network in an advanced zone.

@weizhouapache @rhtyd I'm able to reproduce the issue with 4.14 with Ubuntu 18 management server and hosts. Testing the fix now.

Description

This PR fixes an issue where expunging a VM removes the DHCP entries of another VM in the VR.

Steps to reproduce the issue

(1) Create three VMs, wei-001, wei-002 and wei-003, and start them.

Assume that wei-001 has the largest IP address when addresses are compared as strings (character by character), not as numbers. For example, 192.168.0.99 > 192.168.0.100.
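Comparing IPv4 addresses as strings and as numbers gives different orders as soon as the last octets have different numbers of digits. A small sketch using Python's standard `ipaddress` module; the address list is made up for illustration:

```python
import ipaddress


def largest_as_string(ips):
    """Largest address when compared character by character, as text."""
    return max(ips)


def largest_numerically(ips):
    """Largest address when compared as IPv4 numbers."""
    return max(ips, key=ipaddress.ip_address)


if __name__ == "__main__":
    ips = ["192.168.0.100", "192.168.0.99", "192.168.0.5"]
    # Text order: the 11th character decides, and '9' > '5' > '1'.
    print(sorted(ips))  # ['192.168.0.100', '192.168.0.5', '192.168.0.99']
    # Numeric order: 5 < 99 < 100.
    print(sorted(ips, key=ipaddress.ip_address))  # ['192.168.0.5', '192.168.0.99', '192.168.0.100']
    print(largest_as_string(ips), largest_numerically(ips))  # 192.168.0.99 192.168.0.100
```

So a VM with 192.168.0.99 sorts after one with 192.168.0.100, which is the kind of VM wei-001 is assumed to be in these steps.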

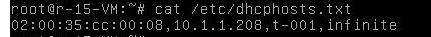

(2) Check /etc/cloudstack/dhcpentry.json and /etc/dhcphosts.txt in the VR.

They have entries for all VMs.

(3) Stop wei-002 and wei-003, then restart the VR (or restart the network with cleanup).

Check /etc/cloudstack/dhcpentry.json and /etc/dhcphosts.txt in the VR.

They have entries for wei-001 only (as wei-002 and wei-003 are stopped).

(4) Expunge wei-002. When it is done, check /etc/cloudstack/dhcpentry.json and /etc/dhcphosts.txt in the VR.

The last entry, "id": "dhcpentry", is removed.
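Why is the "id": "dhcpentry" marker the last entry in the file? Assume the data bag is written as JSON with sorted keys. That assumption is consistent with the IP ordering described in this PR but is not confirmed here. Under it, every IPv4 key starts with a digit, and digits sort before the letter "i", so "id" always ends up last. In this sketch the field names, IP and MAC are hypothetical:

```python
import json

# Hypothetical data bag after step (3): only wei-001 is running.
# Field names, IP and MAC are illustrative assumptions.
SAMPLE_DBAG = {
    "id": "dhcpentry",
    "192.168.0.99": {"host_name": "wei-001", "mac_address": "02:00:00:00:00:01"},
}


def file_key_order(dbag):
    """Key order of the data bag once written as JSON with sorted keys."""
    text = json.dumps(dbag, sort_keys=True, indent=2)
    # json.loads keeps keys in the order they appear in the text (Python 3.7+ dicts).
    return list(json.loads(text))


if __name__ == "__main__":
    print(file_key_order(SAMPLE_DBAG))  # ['192.168.0.99', 'id']
```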

(5) Expunge wei-003. When it is done, check /etc/cloudstack/dhcpentry.json and /etc/dhcphosts.txt in the VR.

The entry for wei-001 is removed, and its IP is removed from /etc/dhcphosts.txt.

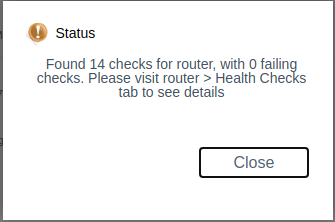

The VR health check fails at dhcp_check.py and dns_check.py.
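The actual dhcp_check.py is not reproduced in this PR. As a hypothetical sketch, a consistency check like this would catch the broken state after step (5): every running VM's IP should appear both as a key in /etc/cloudstack/dhcpentry.json and in /etc/dhcphosts.txt. The hosts-file line format assumed here (`mac,ip,hostname,lease`) and all function names are illustrative assumptions:

```python
import json

DBAG_PATH = "/etc/cloudstack/dhcpentry.json"
HOSTS_PATH = "/etc/dhcphosts.txt"


def ips_in_dbag(dbag_text):
    """IP keys of a dhcpentry-style data bag; "id" is a marker, not an entry."""
    return {key for key in json.loads(dbag_text) if key != "id"}


def ips_in_hosts(hosts_text):
    """IPs in a dnsmasq hosts file, ASSUMING lines like 'mac,ip,hostname,lease'."""
    ips = set()
    for line in hosts_text.splitlines():
        fields = [field.strip() for field in line.split(",")]
        if len(fields) >= 2 and fields[1]:
            ips.add(fields[1])
    return ips


def missing_entries(running_ips, dbag_text, hosts_text):
    """Running-VM IPs absent from either file (sorted); [] means consistent."""
    present = ips_in_dbag(dbag_text) & ips_in_hosts(hosts_text)
    return sorted(set(running_ips) - present)


def check_vr(running_ips):
    """Hypothetical helper: read the two VR files and report missing IPs."""
    with open(DBAG_PATH) as dbag_file, open(HOSTS_PATH) as hosts_file:
        return missing_entries(running_ips, dbag_file.read(), hosts_file.read())


if __name__ == "__main__":
    dbag_text = '{"192.168.0.99": {"host_name": "wei-001"}, "id": "dhcpentry"}'
    hosts_text = "02:00:00:00:00:01,192.168.0.99,wei-001,infinite\n"
    print(missing_entries(["192.168.0.99"], dbag_text, hosts_text))  # []
    # State after step (5): wei-001 is still running but gone from both files.
    print(missing_entries(["192.168.0.99"], "{}", ""))  # ['192.168.0.99']
```

Run on a VR in the state after step (5), `check_vr` would be expected to list wei-001's IP.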

This does not always happen, because the items in /etc/cloudstack/dhcpentry.json are ordered by IP address (as strings), so which entry ends up last depends on the IPs in use.

If the last item is the DHCP entry of a running VM, its DHCP information is removed from dnsmasq.
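The patch itself is not shown in this description. The sketch below is only a hypothetical failure mode, not CloudStack's code, but it reproduces the symptoms of steps (4) and (5) exactly. A search loop looks for the expunged VM's entry, and afterwards its loop variable is used to delete an entry even when nothing matched. Because the stopped VMs' entries were already dropped in step (3), nothing matches, and the last key iterated is deleted: first "id", then wei-001's IP. If the dictionary were iterated in some order other than the sorted file order (for example, hash order in older Python versions), the "last" key would vary. That would fit the comment in the conversation that IPs seemed to be removed randomly. The function names, the `mac_address` field and the MAC values are assumptions:

```python
import json


def remove_buggy(dbag, mac):
    """HYPOTHETICAL bug: delete whichever key the search loop stopped on."""
    key = None
    for key, entry in dbag.items():
        if key != "id" and entry["mac_address"] == mac:
            break
    # When nothing matched, `key` still holds the LAST key iterated, so an
    # unrelated entry (or the "id" marker) is deleted instead.
    if key is not None:
        del dbag[key]
    return dbag


def remove_fixed(dbag, mac):
    """Delete only the entries whose MAC address actually matches."""
    stale = [k for k, e in dbag.items() if k != "id" and e["mac_address"] == mac]
    for k in stale:
        del dbag[k]
    return dbag


def replay(remove):
    """Replay steps (4) and (5); return the keys left after each step."""
    # State after step (3): only wei-001 is running. Round-tripping through
    # sorted JSON mimics a file written with sorted keys, so "id" comes last.
    dbag = json.loads(json.dumps({
        "id": "dhcpentry",
        "192.168.0.99": {"host_name": "wei-001", "mac_address": "02:00:00:00:00:01"},
    }, sort_keys=True))
    remove(dbag, "02:00:00:00:00:02")  # step (4): expunge wei-002, which has no entry
    after_step4 = sorted(dbag)
    remove(dbag, "02:00:00:00:00:03")  # step (5): expunge wei-003, which has no entry
    return after_step4, sorted(dbag)


if __name__ == "__main__":
    print(replay(remove_buggy))  # (['192.168.0.99'], [])
    print(replay(remove_fixed))  # (['192.168.0.99', 'id'], ['192.168.0.99', 'id'])
```

The fixed variant collects matching keys first and deletes only those. Expunging a VM that has no entry then leaves the data bag untouched.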

Types of changes

Feature/Enhancement Scale or Bug Severity

Feature/Enhancement Scale

Bug Severity

Screenshots (if appropriate):

How Has This Been Tested?