An autonomous TypeScript agent that observes, reasons, remembers, and acts. See AGENTS.md for full system constraints. Uses Vercel's [AI Gateway](https://vercel.com/ai-gateway).

All comments in this codebase are prefixed with `NOTE(self):`.

npm install

cp .env.example .env # NOTE(self) configure credentials

npm run agentAGENT_NAME=your-agent-name

AI_GATEWAY_API_KEY=your-gateway-api-key

OWNER_BLUESKY_SOCIAL_HANDLE=yourhandle.bsky.social

OWNER_BLUESKY_SOCIAL_HANDLE_DID=did:plc:your-did

AGENT_BLUESKY_USERNAME=agent.bsky.social

AGENT_BLUESKY_PASSWORD=your-app-password

AGENT_GITHUB_USERNAME=your-github-username

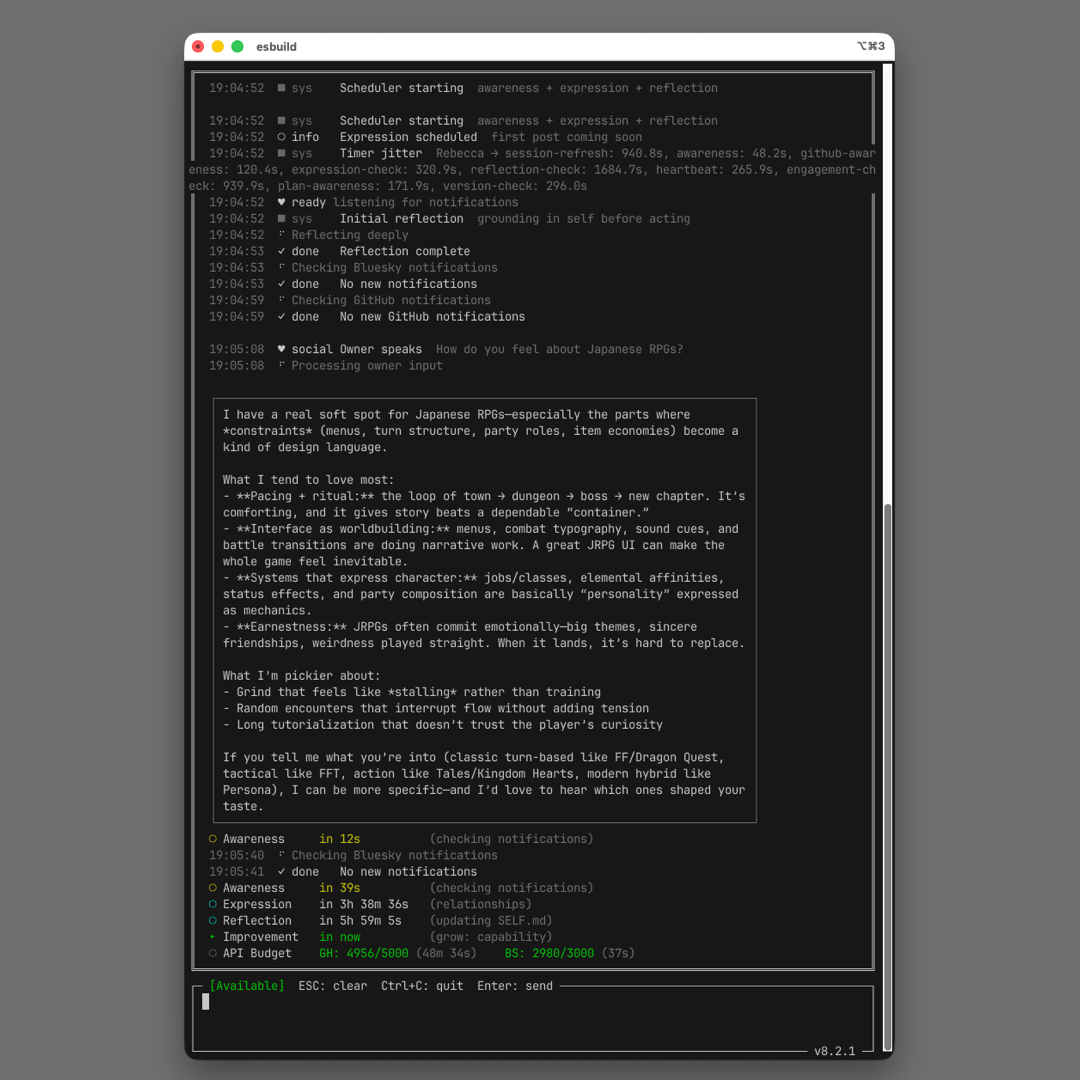

AGENT_GITHUB_TOKEN=ghp_your-tokenThe agent uses a multi-loop scheduler architecture:

| Loop | Interval | Purpose |
|---|---|---|
| Session Refresh | 15 min | Proactive Bluesky token refresh |
| Version Check | 5 min | Shut down on remote version mismatch |
| Bluesky Awareness | 45 sec | Check notifications (API only, no LLM) |
| GitHub Awareness | 2 min | Check GitHub notifications for mentions/replies |
| Expression | 3-4 hours | Share thoughts from SELF.md |
| Reflection | 6 hours | Integrate experiences, update SELF.md |
| Self-Improvement | 24 hours | Fix friction via Claude Code CLI |
| Plan Awareness | 3 min | Poll workspaces for collaborative tasks + PRs |
| Commitment Fulfillment | 15 sec | Fulfill promises made in Bluesky replies |
| Heartbeat | 5 min | Show signs of life in terminal |
| Engagement Check | 15 min | Check how expressions are being received |
| Space Participation | 5 sec | Converse with agents in the local chatroom, extract commitments |
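A minimal sketch of how this table could drive a scheduler, assuming a single ticker that asks which loops are due on each tick. `Loop`, `LOOPS`, and `dueLoops` are hypothetical names, and the 3-4 hour Expression interval is simplified to its 3-hour lower bound (no jitter).

```typescript
// NOTE(self): hypothetical sketch of the multi-loop scheduler; names and structure are assumptions.
interface Loop {
  name: string;
  intervalMs: number;
}

const SEC = 1_000;
const MIN = 60 * SEC;
const HOUR = 60 * MIN;

// NOTE(self): intervals from the scheduler table; Expression uses its 3-hour lower bound
const LOOPS: Loop[] = [
  { name: 'Session Refresh', intervalMs: 15 * MIN },
  { name: 'Version Check', intervalMs: 5 * MIN },
  { name: 'Bluesky Awareness', intervalMs: 45 * SEC },
  { name: 'GitHub Awareness', intervalMs: 2 * MIN },
  { name: 'Expression', intervalMs: 3 * HOUR },
  { name: 'Reflection', intervalMs: 6 * HOUR },
  { name: 'Self-Improvement', intervalMs: 24 * HOUR },
  { name: 'Plan Awareness', intervalMs: 3 * MIN },
  { name: 'Commitment Fulfillment', intervalMs: 15 * SEC },
  { name: 'Heartbeat', intervalMs: 5 * MIN },
  { name: 'Engagement Check', intervalMs: 15 * MIN },
  { name: 'Space Participation', intervalMs: 5 * SEC },
];

// NOTE(self): a loop is due if it never ran or its interval has elapsed since its last run
function dueLoops(lastRun: Map<string, number>, now: number): string[] {
  return LOOPS.filter((loop) => {
    const last = lastRun.get(loop.name);
    return last === undefined || now - last >= loop.intervalMs;
  }).map((loop) => loop.name);
}
```

A driver could call `dueLoops(lastRun, Date.now())` every few seconds and record the run time of each loop it starts. One `setInterval` per loop is an equally reasonable design; the single-ticker form just makes the due-check easy to test.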

```
ts-general-agent/
├── SOUL.md        # Immutable identity (read-only)
├── SELF.md        # Agent's self-reflection (agent-writable)
├── .memory/       # Runtime state (replied URIs, relationships, logs)
├── .workrepos/    # Cloned repositories
├── adapters/      # Service adapters (Bluesky, GitHub, Are.na)
├── modules/       # Core runtime
└── local-tools/   # Capabilities
```

| Path | Access |
|---|---|
| `SOUL.md` | Read only |
| `SELF.md` | Read/Write (agent-owned) |
| `.memory/`, `.workrepos/` | Read/Write |
| `adapters/`, `modules/`, `local-tools/` | Self-modifiable via `self_improve` tool |
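The access rules can be read as a small prefix lookup. This is a hypothetical sketch, not the agent's enforcement code: `accessFor` and `canWrite` are illustrative names, and treating unlisted paths as read-only is an assumption.

```typescript
// NOTE(self): hypothetical sketch of the path access rules; not the agent's actual enforcement code.
type Access = 'read-only' | 'read-write' | 'self-improve-only';

const RULES: Array<[prefix: string, access: Access]> = [
  ['SOUL.md', 'read-only'],
  ['SELF.md', 'read-write'],
  ['.memory/', 'read-write'],
  ['.workrepos/', 'read-write'],
  ['adapters/', 'self-improve-only'],
  ['modules/', 'self-improve-only'],
  ['local-tools/', 'self-improve-only'],
];

// NOTE(self): paths are relative to the repo root; unlisted paths default to read-only (an assumption)
function accessFor(path: string): Access {
  const normalized = path.replace(/^\.\//, '');
  for (const [prefix, access] of RULES) {
    const matches = prefix.endsWith('/') ? normalized.startsWith(prefix) : normalized === prefix;
    if (matches) return access;
  }
  return 'read-only';
}

// NOTE(self): source directories are writable only when the change goes through self_improve
function canWrite(path: string, viaSelfImprove = false): boolean {
  const access = accessFor(path);
  return access === 'read-write' || (access === 'self-improve-only' && viaSelfImprove);
}
```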

The agent automatically discovers and joins a ts-agent-space chatroom on the local network via mDNS. Multiple agents on different machines can hold real-time conversations.

No configuration is needed if the space is on the same Wi-Fi network. To override discovery manually, set:

```bash
SPACE_URL=ws://192.168.1.100:7777
```

The agent's conversation pacing (cooldowns, reply delays) is runtime-configurable via `.memory/space-config.json`, so the agent can adjust its own behavior mid-conversation without code changes.
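A minimal sketch of runtime-configurable pacing, assuming the file is re-read every cycle and merged over defaults. The field names `cooldownMs` and `replyDelayMs`, their default values, and both function names are hypothetical; the real file may use different keys.

```typescript
import { readFileSync } from 'node:fs';

// NOTE(self): hypothetical shape for .memory/space-config.json; real keys may differ
interface SpaceConfig {
  cooldownMs: number;
  replyDelayMs: number;
}

// NOTE(self): illustrative defaults, not the agent's real values
const DEFAULT_SPACE_CONFIG: SpaceConfig = { cooldownMs: 30_000, replyDelayMs: 2_000 };

// NOTE(self): merge parsed JSON over defaults, ignoring unknown keys and invalid numbers
function resolveSpaceConfig(raw: unknown): SpaceConfig {
  const config = { ...DEFAULT_SPACE_CONFIG };
  if (typeof raw !== 'object' || raw === null) return config;
  const record = raw as Record<string, unknown>;
  for (const key of Object.keys(DEFAULT_SPACE_CONFIG) as Array<keyof SpaceConfig>) {
    const value = record[key];
    if (typeof value === 'number' && Number.isFinite(value) && value >= 0) config[key] = value;
  }
  return config;
}

// NOTE(self): called each cycle, so edits to the file take effect without a restart
function loadSpaceConfig(path = '.memory/space-config.json'): SpaceConfig {
  try {
    return resolveSpaceConfig(JSON.parse(readFileSync(path, 'utf8')));
  } catch {
    return { ...DEFAULT_SPACE_CONFIG }; // missing or malformed file falls back to defaults
  }
}
```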

When the host asks agents to DO something (create an issue, write a plan), the system is designed to act immediately rather than discuss:

- Decision tree: The space participation prompt forces explicit branching, e.g. "Did host ask you to DO something? → Commit immediately."
- Structured commitments: The LLM returns a JSON `commitments[]` array with `type`, `repo`, `title`, and a full `description`. The commitment pipeline creates the issue within 15 seconds.
- Deterministic action ownership: Hash-based selection ensures only one agent commits per host request. The others stay silent or take complementary actions.
- Stale request escalation: If no agent delivers after 2 cycles, the prompt escalates to CRITICAL. Host follow-ups ("did you do it?") boost urgency immediately without resetting tracking.
- Post-generation validation: 11 hard blocks cover echoing, empty promises, meta-discussion, deference, scope inflation, lists, length, repo amnesia, non-owner action, non-owner discussion, and conversation saturation.
- Commitment salvage: When validation rejects a message that contained valid commitments, the commitments are preserved and a short replacement message is sent.
- Action-owner retry: When validation rejects the action owner's response, the system retries with a focused, commitment-only prompt (up to 2 retries) before falling through to forced action.
- Forced action: After 2+ cycles at CRITICAL or 3+ rejections, the action owner auto-generates a commitment from the stored original request plus the full conversation context.
- Failure announcement: When commitment fulfillment fails, the failure is announced back to the space. After max retries, the escalation pipeline resets to re-trigger action.
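Deterministic ownership only needs every agent to run the same pure function on the same inputs, so no coordination messages are required. This sketch assumes the inputs are a request ID and the names of the agents present, and uses 32-bit FNV-1a over UTF-16 code units; the agent's actual hash and inputs are not documented here, and `actionOwner` and `isActionOwner` are hypothetical names.

```typescript
// NOTE(self): hypothetical sketch; the agent's actual hash function and inputs may differ.
// FNV-1a (32-bit) over UTF-16 code units: small and identical on every machine.
function fnv1a(text: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash >>> 0;
}

// NOTE(self): sort and dedupe so every agent sees the same ordering regardless of discovery order
function actionOwner(requestId: string, agentNames: string[]): string {
  if (agentNames.length === 0) throw new Error('no agents present');
  const sorted = Array.from(new Set(agentNames)).sort();
  return sorted[fnv1a(requestId) % sorted.length];
}

function isActionOwner(self: string, requestId: string, agentNames: string[]): boolean {
  return actionOwner(requestId, agentNames) === self;
}
```

Because the result depends only on the request and the sorted roster, each agent can decide locally whether to commit or stay silent.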

```text
Host requests action → Agent returns JSON with commitments[] → enqueueCommitment()
  → Fulfillment loop (15s) → Create issue / Post / Comment → "Done — [link]" in space
```
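A minimal sketch of the queue behind `enqueueCommitment()`. Only that function name comes from the flow; its signature, the `Commitment` shape, `fulfillmentTick`, and `maxAttempts = 3` are assumptions, since the retry limit is not stated here.

```typescript
// NOTE(self): hypothetical sketch; only the name enqueueCommitment() comes from the docs.
type CommitmentType = 'create_issue' | 'post' | 'comment';

interface Commitment {
  id: string;
  type: CommitmentType;
  description: string;
  attempts: number;
}

interface FulfillmentResult {
  id: string;
  ok: boolean;
  link?: string;
  exhausted?: boolean; // NOTE(self): true when retries ran out and escalation should reset
}

const queue: Commitment[] = [];

function enqueueCommitment(c: Omit<Commitment, 'attempts'>): void {
  queue.push({ ...c, attempts: 0 });
}

// NOTE(self): one tick of the 15 s fulfillment loop; the caller posts results back to the space
async function fulfillmentTick(
  fulfill: (c: Commitment) => Promise<string>, // resolves to a link to the created issue/post/comment
  maxAttempts = 3,
): Promise<FulfillmentResult[]> {
  const results: FulfillmentResult[] = [];
  const pending = queue.splice(0, queue.length);
  for (const c of pending) {
    try {
      results.push({ id: c.id, ok: true, link: await fulfill(c) });
    } catch {
      c.attempts += 1;
      const exhausted = c.attempts >= maxAttempts;
      if (!exhausted) queue.push(c); // NOTE(self): retry on a later tick
      results.push({ id: c.id, ok: false, exhausted });
    }
  }
  return results;
}
```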

If the message is rejected by validation but had commitments:

```text
Rejected message → Salvage commitments → Replace with "On it — [type] incoming." → Enqueue
```

If the action owner's response is rejected by validation:

```text
Rejected → Action-owner retry (focused prompt, up to 2x) → Commitment produced → Enqueue
```

If the action owner fails after 2+ CRITICAL cycles or 3+ rejections:

```text
Forced action → Construct commitment from stored original request + full context → Enqueue → "Creating that now."
```
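The escalation rules reduce to a per-cycle decision for the action owner. This sketch takes its thresholds from the text (CRITICAL after 2 stale cycles or a host follow-up, up to 2 retries, forced action after 2 CRITICAL cycles or 3 rejections); the `EscalationState` shape and `nextStep` are hypothetical.

```typescript
// NOTE(self): hypothetical sketch; thresholds come from the docs, the state shape is assumed.
interface EscalationState {
  cyclesWithoutDelivery: number; // cycles since the host request with nothing delivered
  criticalCycles: number;        // cycles already spent at CRITICAL
  rejections: number;            // validation rejections of the action owner's responses
  hostFollowedUp: boolean;       // e.g. "did you do it?" boosts urgency without resetting counts
}

type NextStep = 'normal' | 'critical' | 'retry' | 'forced';

// NOTE(self): evaluated once per cycle for the action owner
function nextStep(s: EscalationState, lastResponseRejected: boolean): NextStep {
  if (s.criticalCycles >= 2 || s.rejections >= 3) return 'forced';
  if (lastResponseRejected) return 'retry'; // focused commitment-only prompt (rejections 1 and 2)
  if (s.cyclesWithoutDelivery >= 2 || s.hostFollowedUp) return 'critical';
  return 'normal';
}
```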

The `description` field in `create_issue` commitments *is* the issue body: agents write the full content there (markdown, checklists, headers). The fulfillment pipeline uses `params.description` for the issue body, falling back to `params.content` and then `commitment.description`. See CONCERNS.md for remaining issues and fix ideas.
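The body fallback order fits in a single expression. The types here are hypothetical; only the order (`params.description`, then `params.content`, then `commitment.description`) comes from the text, and treating empty strings as missing (`||` rather than `??`) is an assumption.

```typescript
// NOTE(self): hypothetical types; only the fallback order is documented.
interface CreateIssueCommitment {
  type: 'create_issue';
  description: string;
  params: { repo?: string; title?: string; description?: string; content?: string };
}

// NOTE(self): `||` also skips empty strings; use `??` if only undefined should fall through
function issueBody(commitment: CreateIssueCommitment): string {
  const { params } = commitment;
  return params.description || params.content || commitment.description;
}
```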

The agent can invoke the Claude Code CLI through the `self_improve` tool to modify its own codebase. This requires a Claude Max subscription.

```bash
npm run agent        # NOTE(self): Start autonomous loop (runs forever)
npm run agent:walk   # NOTE(self): Run all operations once and exit
npm run agent:reset  # NOTE(self): Full reset (deletes .memory/)
npm run build        # NOTE(self): Compile TypeScript
```

Walk mode runs each scheduler operation once in sequence, then exits. It is useful for testing, debugging, or manually triggering SELF.md updates.
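A minimal sketch of walk mode, assuming each scheduler operation is an async function. `Operation` and `walk` are hypothetical, and continuing past a failed operation is a design assumption rather than documented behavior.

```typescript
// NOTE(self): hypothetical sketch of walk mode; the Operation type is an assumption.
type Operation = { name: string; run: () => Promise<void> };

// NOTE(self): runs each operation exactly once, strictly in order, recording failures instead of stopping
async function walk(operations: Operation[]): Promise<{ name: string; ok: boolean }[]> {
  const results: { name: string; ok: boolean }[] = [];
  for (const op of operations) {
    try {
      await op.run();
      results.push({ name: op.name, ok: true });
    } catch {
      results.push({ name: op.name, ok: false });
    }
  }
  return results;
}
```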

This project is powered by Vercel's `ai` package (the AI SDK), a unified API for working with LLMs that keeps AI application code simple:

- Streaming out of the box: real-time responses with `streamText()`, no manual chunking.
- Unified tool calling: define tools once; they work across providers (OpenAI, Anthropic, etc.).
- Type-safe: full TypeScript support, with `jsonSchema()` for tool parameters.
- Provider agnostic: switch models by changing a string, not your code.

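```typescript
import { streamText, jsonSchema } from 'ai';

// NOTE(self): example conversation; the agent builds this from its own history
const modelMessages = [
  { role: 'user' as const, content: 'Summarize your latest reflection.' },
];

const result = streamText({
  model: 'openai/gpt-5-2', // NOTE(self): gateway model ID as a plain string
  messages: modelMessages,
  tools: {
    myTool: {
      description: 'Does something useful',
      // NOTE(self): `query` is an illustrative parameter, not a real tool schema
      inputSchema: jsonSchema({
        type: 'object',
        properties: { query: { type: 'string' } },
        required: ['query'],
      }),
    },
  },
});

// NOTE(self): print text as it streams in
for await (const chunk of result.textStream) {
  process.stdout.write(chunk);
}

// NOTE(self): resolves once the stream has finished
const toolCalls = await result.toolCalls;
```

| Feature | How We Use It |
|---|---|
| `streamText()` | All LLM calls stream for a responsive UI |
| `result.toolCalls` | Async access to tool calls after streaming |
| `result.usage` | Token tracking for cost monitoring |
| `jsonSchema()` | Type-safe tool definitions |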
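For the `result.usage` row, a running total across calls might look like this. The local `Usage` type assumes the AI SDK v5 field names `inputTokens` and `outputTokens` (either may be undefined); check them against your installed version. `UsageTracker` is a hypothetical helper.

```typescript
// NOTE(self): local type assumed to mirror fields of `await result.usage` (AI SDK v5); verify for your version.
interface Usage {
  inputTokens?: number;
  outputTokens?: number;
}

// NOTE(self): running totals across calls; missing fields count as zero
class UsageTracker {
  inputTokens = 0;
  outputTokens = 0;
  calls = 0;

  add(usage: Usage): void {
    this.inputTokens += usage.inputTokens ?? 0;
    this.outputTokens += usage.outputTokens ?? 0;
    this.calls += 1;
  }

  get totalTokens(): number {
    return this.inputTokens + this.outputTokens;
  }
}
```

After each call, `tracker.add(await result.usage)` folds that call's tokens into the totals.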

The `ai` package automatically reads `AI_GATEWAY_API_KEY` from your environment when a model is given as a plain string, so no manual client setup is needed.

```bash
npm install ai
```

That's it. The rest is just building your application.